This blog post is all about robots.txt and how you can edit it to help search engines crawl your website more efficiently, which can support your traffic and rankings. A well-optimized robots.txt is one small but useful part of good SEO.

I keep working on my SEO skills, and I love sharing these techniques so that others can improve their skills and their websites' performance too.

Here I am going to explain how you can improve your robots.txt file.

All the tips I write about are ethical (white-hat), so you don't need to worry about penalties when you use them. Start applying them once you understand them.

Don't skip any step; keep reading so you don't miss anything that could boost your SEO performance.

Robots.txt: What You Need to Know

The robots.txt file implements the Robots Exclusion Protocol (also called the robots exclusion standard): a set of rules that most well-behaved web crawlers agree to follow. The protocol was formally standardized as RFC 9309 in 2022.

Keep in mind that robots.txt is a request, not a security measure: malicious bots such as malware scanners, spambots, and email harvesters simply ignore it.

SEO Performance: Just an Edit Away!

Many people are trying to improve their SEO, and often all it takes is editing a few lines of text. Many SEO techniques are neither tricky nor time-consuming, and editing robots.txt is one of them.

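If you have several websites or subdomains, you need a separate robots.txt file for each one, because a robots.txt file only applies to the host (including protocol and port) it is served from. Most search engines, including Google, Bing, Yahoo, DuckDuckGo, Ask, AOL, Yandex, and Baidu, respect robots.txt, though each supports a slightly different set of extensions beyond the core rules (for example, Bing honors Crawl-delay while Google ignores it).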

You can fix your robots.txt file quickly, without any special technical skills.

Get ready to see the difference. I will walk you through editing your robots.txt step by step, in a way that search engines will appreciate.

Importance of Robots.txt

Now that you know what robots.txt is, let's look at why it matters.

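Robots.txt is a small text file that tells web crawlers and other bots how to treat your website. Its rules specify which crawlers they apply to and which parts of the site those crawlers may or may not crawl. Note that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it.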

This lets you guide well-behaved bots easily and ethically. Search engines use robots.txt to decide which parts of your site to skip, so a good robots.txt keeps them away from unimportant areas and lets them crawl your real content efficiently.

When a well-behaved bot wants to crawl your website, it first requests robots.txt and then follows only the rules that apply to it. If you have not uploaded a robots.txt file, all bots are allowed to crawl your whole website without limitation. So it is best to add a robots.txt file if there are parts of your site you don't want crawled.
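To illustrate how a bot picks "the rules that apply to it", here is a minimal sketch using Python's standard-library `urllib.robotparser`. The bot names (`Googlebot`, `SomeOtherBot`), the paths, and `example.com` are hypothetical. Python's parser is simpler than Google's (it applies rules in file order and does not support path wildcards), so treat it as an illustration of the idea rather than an exact model of any search engine.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one group for Googlebot, one for every other bot.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /no-google/

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
# parse() takes a list of lines; the empty line separates the two groups.
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/private/report.html"
for agent in ("Googlebot", "SomeOtherBot"):
    # A bot obeys only the group naming it, falling back to "*" otherwise.
    print(agent, parser.can_fetch(agent, url))
```

Googlebot may fetch `/private/report.html` here because its own group only blocks `/no-google/`, while any other bot falls back to the `*` group and is blocked. A malicious bot would simply skip this check.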

Crawlers that respect the protocol will not crawl a folder or directory that your robots.txt disallows. If some of your content or URLs are not worth crawling, you can block them through robots.txt. If a page must stay out of search results entirely, however, use a noindex meta tag instead, because robots.txt alone does not guarantee that.

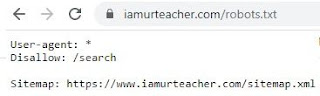

Let's understand robots.txt with an example:

Here User-agent is set to *, a wildcard meaning the rules apply to all robots, and Disallow tells them not to crawl the specified part of the website.
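The same kind of rule can be checked with Python's standard-library `urllib.robotparser`. This is a minimal sketch; the folder name `/private-folder/`, the bot name `AnyBot`, and `example.com` are hypothetical stand-ins, not the rules in the screenshot above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: "*" matches every crawler, and Disallow names a path prefix.
rules = [
    "User-agent: *",
    "Disallow: /private-folder/",
]

parser = RobotFileParser()
parser.parse(rules)

for path in ("/private-folder/notes.html", "/blog/my-post/"):
    url = "https://example.com" + path
    # can_fetch() answers: may this bot crawl this URL under these rules?
    print(path, "allowed" if parser.can_fetch("AnyBot", url) else "blocked")
```

Everything under `/private-folder/` is reported as blocked, and the rest of the site stays crawlable.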

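Yes, you can block any URL from being crawled. This does not directly improve your rankings, and a blocked URL can still appear in search results (usually without a description), but keeping crawlers focused on your important URLs helps them crawl your site more efficiently.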

Check how many pages your website or Blogger blog has. When a bot crawls a website, it tries to crawl every page it can find, so crawling a large site takes time. If your important pages take too long to get crawled, new or updated content may reach search results later than it otherwise would.

Googlebot has a limited budget for crawling each website, called the crawl budget.

In simple terms, crawl budget is the set of URLs on your site that Googlebot can and wants to crawl in a given period.

You can help Googlebot spend its crawl budget on your website efficiently by guiding it with robots.txt.

Some factors can also hurt the crawling and indexing of your website's URLs, such as:

● Duplicate On-Site Content

● Proxies

● Low-Quality Content

● A Large Number of Redirects

That's why people block some URLs through robots.txt. With an optimized robots.txt, you can avoid significant crawling and indexing problems, because Googlebot will spend its time on your essential URLs.

Used properly, robots.txt helps you spend your crawl budget wisely.
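As a rough sketch of how this saves crawl budget, the snippet below runs a list of discovered URLs through a set of rules and keeps only the ones a well-behaved crawler would request. The rules and URL list are hypothetical examples, and Python's `urllib.robotparser` is only an approximation of how Googlebot evaluates rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules that keep crawlers away from low-value URLs.
rules = [
    "User-agent: *",
    "Disallow: /wp-admin/",
    "Disallow: /thank-you/",
    "Disallow: /search",
]

# Hypothetical list of URLs a crawler has discovered on the site.
discovered = [
    "https://example.com/",
    "https://example.com/blog/robots-txt-guide/",
    "https://example.com/wp-admin/options.php",
    "https://example.com/thank-you/",
    "https://example.com/search?q=seo",
]

parser = RobotFileParser()
parser.parse(rules)

# Only URLs that pass the robots.txt check would be requested.
to_crawl = [u for u in discovered if parser.can_fetch("Googlebot", u)]
print(to_crawl)
```

Only the home page and the blog post remain. Rules are path prefixes, so `Disallow: /search` would also block a page such as `/search-tips/`; choose prefixes carefully.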

To learn more about crawl budget, see Google's Webmaster Central Blog.

Robots.txt will amaze you with its power

Let's find it and make some edits to boost your SEO.

You can easily view anyone's robots.txt file.

Just enter the site's root URL followed by /robots.txt, for example: https://www.iamurteacher.com/robots.txt
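Because robots.txt always sits at the root of a host, you can derive its address from any page URL. This is a minimal sketch with a hypothetical helper name, `robots_txt_url`; the page paths are made up. Note that each subdomain, protocol, and port gets its own file.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url (hypothetical helper).

    robots.txt lives at the root of a host, so the page's path, query and
    fragment are dropped while the scheme, host and port are kept.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.iamurteacher.com/some-post/?ref=home"))
print(robots_txt_url("https://blog.example.com:8080/a/b"))
```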

If you get an error such as 404 Not Found, your site has no robots.txt file. Crawlers then treat the whole site as crawlable, so create one if there is anything you want to keep them out of.
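Here is a small sketch of how the response code for robots.txt is interpreted under RFC 9309 (the Robots Exclusion Protocol standard): a 4xx answer means "no rules", while a 5xx answer makes crawlers assume the whole site is off-limits. The function name is hypothetical, redirects are not modelled, and individual search engines add their own nuances on top of the standard.

```python
def robots_status_meaning(status: int) -> str:
    """Summarize how RFC 9309 tells crawlers to treat a robots.txt status code.

    Hypothetical helper; 3xx redirects are followed by real crawlers and are
    not modelled here.
    """
    if 200 <= status < 300:
        return "parse the file and obey its rules"
    if 400 <= status < 500:
        # "Unavailable": the crawler may access any resource on the site.
        return "no rules: everything may be crawled"
    if 500 <= status < 600:
        # "Unreachable": the crawler must assume the whole site is disallowed.
        return "treat the whole site as disallowed"
    return "not covered by this sketch"


for code in (200, 404, 503):
    print(code, robots_status_meaning(code))
```

So a 404 is not an emergency, but a robots.txt that keeps returning server errors can stop a site from being crawled at all.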

Let’s create the robots.txt file

Before creating a robots.txt file, you need some basic knowledge of its syntax. You should also set up Google Search Console so that, once your robots.txt is ready, you can check that it is valid.

If you don't know how to set up Google Search Console, you can follow my blog post on it.

User-agent: *

User-agent names the bot that the following rules apply to. To address all bots, use * (asterisk).

Disallow:

Disallow tells bots not to crawl the URL path that follows it. An empty Disallow: line blocks nothing.

Finally, you can optionally add a Sitemap line with the full URL of your sitemap (for example, Sitemap: https://example.com/sitemap.xml). That's all: you have created a basic robots.txt.
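The pieces above (User-agent, Disallow, and an optional Sitemap line) can be assembled programmatically. This is a minimal sketch: `build_robots_txt` is a hypothetical helper, the `/wp-admin/` path and sitemap URL are examples, and the result is read back with Python's `urllib.robotparser` to confirm it parses (`site_maps()` needs Python 3.8 or later).

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(disallowed_paths, sitemap_url=None):
    """Build a basic robots.txt that applies to every crawler (hypothetical helper)."""
    lines = ["User-agent: *"]
    # One Disallow line per path; with no paths, an empty "Disallow:" blocks nothing.
    lines += [f"Disallow: {path}" for path in disallowed_paths] or ["Disallow:"]
    if sitemap_url:
        # Sitemap takes a full URL and does not belong to any User-agent group.
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


text = build_robots_txt(["/wp-admin/"], "https://example.com/sitemap.xml")
print(text)

parser = RobotFileParser()
parser.parse(text.splitlines())
print(parser.site_maps())
```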

If you want to add comments to robots.txt, start them with #; everything after the # on that line is ignored:

User-agent: * # your comment here

Disallow: / # your comment here

Be careful with this exact rule: Disallow: / on its own blocks your entire site.
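To confirm that comments are ignored, and to see what a lone slash really does, here is a quick check with Python's standard-library `urllib.robotparser`. The bot name and `example.com` URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# The commented example: everything after "#" on a line is ignored by the parser.
rules = [
    "User-agent: * # applies to every crawler",
    "Disallow: / # a lone slash blocks the whole site",
]

parser = RobotFileParser()
parser.parse(rules)

# With "Disallow: /", every path on the host is blocked, including the home page.
print(parser.can_fetch("AnyBot", "https://example.com/"))
print(parser.can_fetch("AnyBot", "https://example.com/blog/post/"))
```

Both checks print False, which is why `Disallow: /` should only go live if you really want no crawling at all.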

Optimize your robots.txt for better SEO

The best way to use robots.txt is to tell search engines not to crawl URLs that are not meant for the public. This saves your crawl budget for the pages that matter.

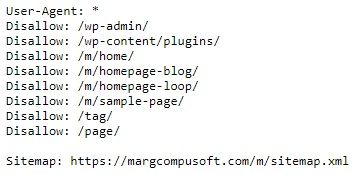

In this robots.txt, we have disallowed the WordPress login page.

This page is of no use to the public, or to Googlebot, so why waste crawl budget on it?

If you want to block a single page from being crawled, you can do it like this:

Disallow: /page-name/

If your website sells downloadable software and redirects buyers to a thank-you page after payment, you should also disallow that thank-you page:

Disallow: /thank-you/
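Disallow values are matched as path prefixes, so the trailing slash matters. This sketch uses Python's standard-library `urllib.robotparser`; the bot name, `example.com`, and `/thank-you/order-123` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

rules = [
    "User-agent: *",
    "Disallow: /page-name/",
    "Disallow: /thank-you/",
]
parser = RobotFileParser()
parser.parse(rules)

# "/thank-you/" blocks that folder and everything below it, but not "/thank-you".
for path in ("/thank-you/", "/thank-you/order-123", "/thank-you", "/page-name/"):
    allowed = parser.can_fetch("AnyBot", "https://example.com" + path)
    print(f"{path:<22} {'allowed' if allowed else 'blocked'}")
```

If your thank-you page is served without a trailing slash, write the rule without one (for example `Disallow: /thank-you`), keeping in mind that this also matches any other path starting with `/thank-you`.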

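You may also want to keep some URLs out of search results with noindex and nofollow. Disallowing your thank-you page stops well-behaved crawlers from crawling it, but the URL can still be indexed if other pages link to it.

A thank-you page has no value in search results, so to keep it out you need noindex. However, Google stopped supporting Noindex rules inside robots.txt in September 2019, so a line such as Noindex: /thank-you/ no longer works. Use a robots meta tag instead, and do not disallow that page at the same time: a crawler that is blocked from a page never sees its noindex tag.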

If you want to noindex and nofollow a specific URL, you can do it from your site's admin panel.

Open the post or page in HTML mode and add the tag below right after the opening <head> tag:

<head>

<meta name="robots" content="noindex,nofollow">

</head>
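To double-check that a page really carries the tag, you can scan its HTML for robots meta directives. This is a minimal sketch using Python's standard-library `html.parser`; the class and function names are hypothetical, and the sample page is made up. Remember that a crawler only sees this tag if robots.txt lets it fetch the page.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect directives from every <meta name="robots" content="..."> tag (hypothetical)."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases attribute names; values may be None.
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for directive in attributes.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def robots_directives(html: str) -> set:
    finder = RobotsMetaFinder()
    finder.feed(html)
    finder.close()
    return finder.directives


page = '<html><head><meta name="robots" content="noindex,nofollow"></head><body></body></html>'
print(sorted(robots_directives(page)))
```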

After you have made your changes, make sure the file is valid and free of errors. You can check it with the robots.txt report in Google Search Console, which shows the version of the file Google fetched and any errors or warnings it found.
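Before uploading, a quick local check can catch typos such as a missing colon or a field crawlers will ignore. This is a minimal sketch with a hypothetical helper, `lint_robots_txt`; it only knows the fields defined in RFC 9309 plus the widely supported Sitemap line, so it is no substitute for Search Console. Extend `KNOWN_FIELDS` if you rely on engine-specific extensions such as Crawl-delay.

```python
# Fields defined by RFC 9309, plus the widely supported Sitemap extension.
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap"}


def lint_robots_txt(text: str) -> list:
    """Return warnings for lines a crawler is likely to ignore (hypothetical helper)."""
    warnings = []
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding spaces
        if not line:
            continue
        if ":" not in line:
            warnings.append(f"line {number}: missing ':' in {raw.strip()!r}")
            continue
        field = line.split(":", 1)[0].strip().lower()
        if field not in KNOWN_FIELDS:
            warnings.append(f"line {number}: unsupported field {field!r}")
    return warnings


sample = "User-agent: *\nDisallow: /thank-you/\nNoindex: /thank-you/\nDisalow /tmp/\n"
for warning in lint_robots_txt(sample):
    print(warning)
```

Here the `Noindex` line is flagged (Google no longer honors it in robots.txt), and so is the misspelled `Disalow` line, which has no colon.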

All Set: Just Wait and Watch

You're done with your robots.txt; now be patient until search engines crawl your site again. If everything is set up properly, it can help the traffic of the blog you manage.

If you have any problems, find any inaccurate information, or need my help, feel free to drop a comment below. If you found this article useful, please share it with your friends and family. Thank you!
